How financial institutions are leveraging data for business objectives

Having a data governance program isn’t just best practice—it’s a necessity. From regulation to business intelligence, the modern financial institution relies on tremendous amounts of data to make decisions and to manage organizational risk. Yet, the sheer volume and breadth of that data can present challenges when it comes to storing, accessing, and analyzing it. Processes tend to become siloed between departments, and data becomes distributed and de-standardized. That’s why having documented policies and processes surrounding this information is critical to ensure that its quality is never compromised and that it is accessible when you need it.

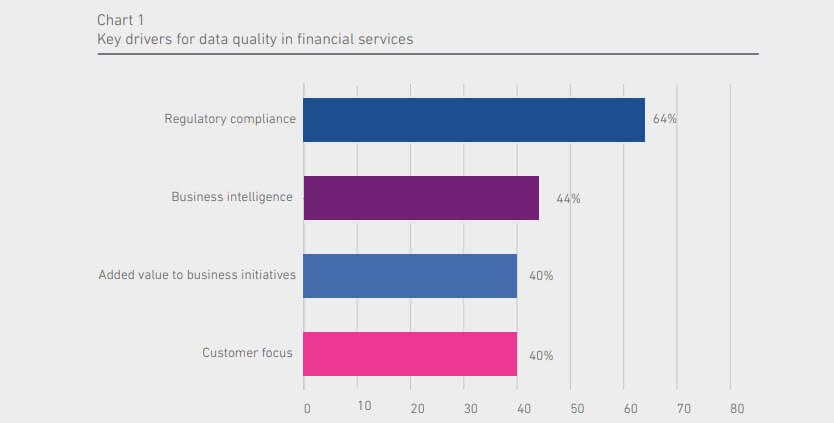

Key drivers for data quality in finance

Regulatory compliance

It goes without saying that executives at your institution understand the risks associated with non-compliance. In light of the grave personal and professional consequences that executives can face (imprisonment in the most extreme cases), it’s reasonable to assume that staying compliant with regulation is high on their priority lists. Through our study, we found that 85 percent of financial institutions say their senior management teams have a clear understanding of the value of accurate data and the benefits of a data quality program. Further, they say regulation is the main organizational objective to which their data quality and data management programs apply.

In the financial services sector, especially, increasing pressure from regulators to report on things like aggregate risk exposure and capital adequacy means that you need to uphold the highest standards of data quality and data management. Without a good handle on your data, your institution will find it difficult to meet regulation such as Basel Committee on Banking Supervision (BCBS) 239 or the Comprehensive Capital Analysis and Review (CCAR) framework. In addition, you must be able to quickly access data from disparate systems when complying with regulation like Know Your Customer (KYC).

If data quality is starting to sound like an IT-only problem, it’s not. Regulation such as the Telephone Consumer Protection Act (TCPA) is specifically targeted at marketing practices, and it can be a big headache for organizations that have errors in their contact records. The regulation is designed to reduce the number of telemarketing calls consumers receive and mandates that they opt in to being contacted on their phones or by SMS. If your financial institution sends account alerts or balance reminders to consumers’ mobile phones, you will want to ensure your customer records are accurate, and that you have obtained all necessary permissions. As of 2017, a single TCPA violation can cost $11,000, and the typical penalty for an enterprise can run into the tens of millions of dollars.

How do you achieve this? Start by prioritizing the customer journey. Analyze the customer information already in your systems so that you can begin to understand what your customers want from your institution. Then, break down silos between your systems to achieve a consolidated, 360-degree customer view. Of course, the financial data quality you’re working with is critical to your ability to achieve these goals. That’s why 87 percent of financial institutions agree with the statement that customer experience is a critical driver behind data quality initiatives.

Data quality maturity

By and large, the financial services industry is much more mature with respect to data quality than other industries. Our study revealed that centralized data roles for corporate-wide assets, such as a Chief Data Officer (CDO), are more likely to be prevalent in financial services companies (27%) than in other sectors (13%). We view organizations with CDOs in place to be among the most advanced when it comes to data quality maturity. Furthermore, we found that organizations in the financial services sector are more likely to have a platform approach to data than those in other sectors (35% vs. 17%, respectively).

It makes sense then that financial institutions see far less impact related to bad data quality compared to other industries. According to our study, 58 percent of financial institutions are negatively impacted by poor data, compared with 69 percent of other industries. Still, we believe the percentage of financial institutions suffering from poor data quality remains too high, and that they need to continue refining their approaches to data quality.

Measuring data quality

To determine the current state of your data quality, and to prove the effects of attempts to remediate bad data, you’ll need to be able to measure your data quality. Many financial institutions invest in technology that provides visual dashboards to make data quality monitoring easy. In fact, 65 percent of organizations are most likely to use technology tools that quantify the cost of bad data. Further, they tend to measure their data quality either monthly (28%) or daily (26%).

But what if you don’t have any budget to buy these tools that give visibility into your data? How do you begin to quantify your bad data? This is often the case for smaller organizations, or for those that do not have an established data quality program. We find that most financial institutions look at compliance penalties tied directly to bad data, the cost of lost sales opportunities, and the amount of wasted time to tie a number to their bad data.
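As a rough illustration of tying a number to bad data, the three cost categories above can be added up in a few lines of Python. This is a minimal sketch, not a method taken from the study: the `BadDataCosts` class, its field names, and every figure below are hypothetical placeholders to be replaced with your own estimates.

```python
from dataclasses import dataclass


@dataclass
class BadDataCosts:
    """Annual inputs for a rough cost-of-bad-data estimate (all hypothetical)."""
    compliance_penalties: float      # fines traced directly to data errors
    lost_sales_opportunities: float  # estimated revenue lost to bad records
    hours_wasted: float              # staff hours spent fixing or working around bad data
    hourly_rate: float               # average loaded cost per staff hour

    def wasted_time_cost(self) -> float:
        # Convert wasted hours into a monetary figure.
        return self.hours_wasted * self.hourly_rate

    def total(self) -> float:
        # Sum of the three cost categories named in the text.
        return (self.compliance_penalties
                + self.lost_sales_opportunities
                + self.wasted_time_cost())


# Placeholder figures for illustration only; substitute your own estimates.
costs = BadDataCosts(
    compliance_penalties=50_000.0,
    lost_sales_opportunities=120_000.0,
    hours_wasted=1_500.0,
    hourly_rate=40.0,
)
print(f"Wasted time: ${costs.wasted_time_cost():,.0f}")
print(f"Estimated annual cost of bad data: ${costs.total():,.0f}")
```

Even a coarse total like this gives stakeholders a starting figure to challenge and refine, which is usually easier than arguing for funding with no number at all.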

Implementing a data quality program

According to our study, 80 percent of finance organizations say poor data quality hurts their business initiatives. So it’s clear that achieving key business objectives hinges on your ability to implement an ongoing data quality program. This means investing in the right people, processes, and technology.

Yet, while those in financial services today understand the necessity for high-quality data, for many of them, the process of developing a data quality program is harder than they expect. Of the businesses we spoke with, 54 percent said they found the process of building a business case for data quality to be difficult, and 77 percent believed that too many stakeholders were involved in the process. Given the challenges around building a business case, it’s not surprising that most financial institutions that embark on data quality programs take 6-12 months to fully implement them.

To expedite this process and achieve success, it’s critical to identify some of the common challenges other organizations have encountered, and work to address them before you begin your own program. Through our study, we identified a lack of budget and of demonstrable return on investment (ROI) as leading obstacles that organizations face. This is likely due to an inability to quantify the effects of poor data quality on the business, which is a common hurdle we see stakeholders face when asking for funding. It can be difficult to determine budget and return on investment when you don’t understand the monetary impact on the business.

If you’re in a similar circumstance, building a case for a data quality program can be easy if you think about the broader effects of bad data on the business. For instance, can data quality issues be linked to wasted time? Do data quality issues make your business processes inefficient or unachievable? Can data quality issues increase risk to the business, such as regulatory risk, or negatively impact brand value? If you’re able to answer these questions, you’re well on your way to building a business case.

Conclusion

If your financial institution is putting forward a proposal for a data quality program, you will want to focus your efforts around strategic business initiatives that are specific to your organization. These might include objectives like achieving regulatory compliance, increasing revenue, improving worker productivity, enhancing the customer experience, or expanding market penetration. By focusing on strategic areas for your business, decision makers will find it much easier to see the value in your data quality program.