
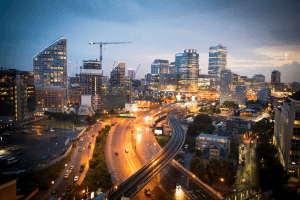

Using data to improve city services

Government agencies at every level (city, state, and federal) collect large amounts of data; that’s a fact. The challenge for many lies in how that information is collected and processed. Much of it is locked up in departmental silos on aging computer systems, where it is hard to access for further analysis or to share publicly. Much of it may have been collected on paper forms or entered by busy staff with plenty of other things to do. Yet in a commercial organization, this kind of data would be treated like gold dust! Locked within those siloed systems are nuggets of valuable information that can improve the efficiency of your entire organization, deliver better services, and improve the lives of your constituents.

One example is the Housing and Development department of a prominent Florida municipality. For many years, its various departments have been creating computer records on building permits, inspections, assessments, property sales, property ownership, liens, variances, zoning, utility work, and so on. Each type of record is stored in a separate database for use by the staff in that specific discipline. But when staff need to look at the data from the perspective of an individual citizen or property, it’s nearly impossible. What services has this person received? What’s the overall status of the property? Ah! Can you wait while we write an SQL script to pull data from eight different databases?!

This situation repeats itself across many municipal government departments. Revenue data is organized by revenue source, not by citizen; Health and Human Services organizes its data by program, not by citizen. So how do you determine who lives in the city, what services they consume, and what their future needs are? Surely the answer is easy, right? In today’s cloud-based, big-data-driven world, you just upload everything and use AI to get your answers. Wrong! Unfortunately, many administrations must continue to spend a large share of their IT budgets on supporting and maintaining legacy systems, either because the costs of migrating to more modern platforms are unbudgeted or because the required skills are in very limited supply.

Even when migration projects are funded, they can take a long time to complete. Finding out what data is held in existing databases, and how accurate and complete it is, can be a major challenge. Mapping the old data onto the structure of a new, more comprehensive, and more easily searchable database takes time and expertise, but it is conceptually straightforward. The biggest challenge is preparing the data held in the old formats so that it is ready for the new structure.

Remember those paper forms and busy staff? Yes, those old databases contain a mountain of errors: misspellings, duplicates, inconsistent formats within the same field, data entered into the wrong fields, inconsistent unique identifiers, and different field names used for the same data in different databases (e.g., name, constituent, owner, and applicant can all refer to the same person). You get the picture; it’s messy. And without some kind of data microscope to examine tens of thousands to millions of records, it will likely take your small IT team many months of coding to sort it out before they can even contemplate starting the migration.
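To make the field-name problem concrete, here is a minimal Python sketch of one cleanup step: renaming the different legacy column names for a person to a single canonical field and normalizing whitespace and capitalization. The synonym table, the `person_name` field, and the `harmonize` and `normalize_text` functions are hypothetical illustrations, not part of any particular product or agency system.

```python
import re

# Hypothetical synonym table: legacy column names that refer to the same person.
FIELD_SYNONYMS = {
    "name": "person_name",
    "constituent": "person_name",
    "owner": "person_name",
    "applicant": "person_name",
}


def normalize_text(value):
    """Collapse runs of whitespace, trim, and title-case a free-text value.

    Returns None for missing or blank values so empty cells are explicit.
    """
    if value is None:
        return None
    cleaned = re.sub(r"\s+", " ", str(value)).strip()
    return cleaned.title() if cleaned else None


def harmonize(record):
    """Map legacy field names to canonical names and normalize each value."""
    out = {}
    for field, value in record.items():
        key = field.strip().lower()
        canonical = FIELD_SYNONYMS.get(key, key)
        cleaned = normalize_text(value)
        # If several legacy fields collapse onto one canonical name,
        # keep the first non-empty value seen.
        if out.get(canonical) is None:
            out[canonical] = cleaned
    return out


print(harmonize({"Owner": "  jane   DOE ", "Address": "12  oak street"}))
# → {'person_name': 'Jane Doe', 'address': '12 Oak Street'}
```

Title-casing is a deliberate simplification here; it would turn a name like "McDonald" into "Mcdonald", so a real cleanup rule set would need exceptions.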

Despite these challenges, the benefits of modernizing systems and leveraging the rich sources of data available to agencies are immense. Take the case of one agency that combined data from more than 50 different government organizations into a single database that both government staff and citizens could use. By bringing together data on land ownership, property use, climate, building construction, utilities, building permits and design, property ownership, assessment and tax records, geolocation, and many other attributes, they created an online searchable database that could be used by:

• Citizens contemplating buying property to determine title, taxation, property age, refurbishment, utilities, construction, and numerous other factors

• Land use planners to understand the impact of zoning and determine any need for changes

• Emergency crews to locate utility controls, hydrants, entrances and exits, and surrounding properties and infrastructure, and to identify construction materials, all while on their way to the scene

• City engineers to understand and reduce the impact of construction, repairs, and traffic-flow changes, and to improve the use of public services

A more modest project nevertheless produced a significant improvement for a local police department. They’d been collecting records from emergency calls for over a decade and had amassed thousands of duplicate or incorrect records over that time. When you’re taking notes during an emergency, it’s easy to misspell names and addresses. Yet linking data to the right person can be crucial to a case; for example, you might speak to a vulnerable person and miss that they’re a repeat victim of crime. By cleaning their data and augmenting it with other data streams, the department was able to merge thousands of records into a single “golden” database. Now an incoming call brings up one or two records instead of hundreds, giving staff a clear view of the caller and helping the department improve the safety of its citizens.
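The exact matching method isn’t described here, but the general idea of merging near-duplicate records into a “golden” record can be sketched in a few lines of Python. This version assumes each record has `name` and `address` fields, compares them with the standard library’s `difflib.SequenceMatcher`, groups records whose similarity ratio is at or above a hypothetical threshold of 0.85, and builds each golden record from the most common non-empty value of each field. The sample records and all function names are made up for illustration.

```python
from collections import Counter
from difflib import SequenceMatcher


def match_key(record):
    """Build a lowercase comparison string from the (assumed) name and address fields."""
    return f"{record['name']} {record['address']}".lower().strip()


def similarity(a, b):
    """Similarity ratio in [0, 1] from difflib's SequenceMatcher."""
    return SequenceMatcher(None, a, b).ratio()


def cluster_records(records, threshold=0.85):
    """Greedily group records whose keys are similar to a cluster's first record."""
    clusters = []
    for record in records:
        key = match_key(record)
        for cluster in clusters:
            if similarity(key, match_key(cluster[0])) >= threshold:
                cluster.append(record)
                break
        else:
            # No sufficiently similar cluster found: start a new one.
            clusters.append([record])
    return clusters


def golden_record(cluster):
    """Pick the most common non-empty value of each field across a cluster."""
    fields = sorted({field for record in cluster for field in record})
    golden = {}
    for field in fields:
        values = [r[field] for r in cluster if r.get(field)]
        golden[field] = Counter(values).most_common(1)[0][0] if values else None
    return golden


# Hypothetical call records with typical typos.
records = [
    {"name": "Maria Gonzalez", "address": "12 Oak Street"},
    {"name": "Maria Gonzales", "address": "12 Oak Street"},
    {"name": "Maria Gonzalez", "address": "12 Oak St"},
    {"name": "Tom Baker", "address": "400 Pine Avenue"},
]

clusters = cluster_records(records)
golden = [golden_record(c) for c in clusters]
print(golden)
```

Comparing each record only against the first member of each group keeps the sketch short; real-world record matching usually adds blocking (only comparing records that share, say, a postcode), per-field comparisons, and human review of borderline matches.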

Another benefit of clean, accurate, and comprehensive datasets is that agencies need not be solely responsible for analyzing potential opportunities. Open data projects make anonymized data publicly available to citizens and businesses alike, allowing others to investigate it and come up with new ideas for the future. These ideas can often attract new partners, additional services, and new sources of funding beyond taxes and fees. Data.gov now contains almost 200,000 open datasets from cities and counties across the USA.

If you are contemplating a modernization project and are daunted by the task, there are tools that can help. Modern data management tools, such as Experian Aperture Data Studio, provide fast and highly cost-effective solutions. Experian Aperture Data Studio quickly scans the records from those legacy databases and identifies fields, database errors, duplicates, formatting issues, empty cells, and a host of other issues that typically take months of work to uncover. Its user-friendly interface requires no SQL or other coding, and its rules-based approach allows errors to be identified and fixed rapidly.
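To give a feel for what a rules-based profiling pass looks for, here is a minimal Python sketch that counts empty cells, distinct values, rule violations, and exact duplicate rows in a table of legacy records. It is not how Experian Aperture Data Studio works internally and uses none of its APIs; the column names, the `profile` function, and the ZIP code and date rules are hypothetical examples.

```python
import re
from collections import Counter

# Hypothetical validation rules: column name -> check that returns True for a valid value.
RULES = {
    "zip": lambda v: re.fullmatch(r"\d{5}(-\d{4})?", v) is not None,
    "permit_date": lambda v: re.fullmatch(r"\d{4}-\d{2}-\d{2}", v) is not None,
}


def profile(rows):
    """Summarize empty cells, distinct values, rule violations, and duplicate rows.

    Assumes each row is a dict of column name -> string (or None).
    """
    columns = sorted({col for row in rows for col in row})
    report = {"rows": len(rows), "columns": {}}
    for col in columns:
        values = [row.get(col) for row in rows]
        filled = [v.strip() for v in values if v is not None and v.strip()]
        rule = RULES.get(col)
        invalid = [v for v in filled if rule is not None and not rule(v)]
        report["columns"][col] = {
            "empty": len(values) - len(filled),
            "distinct": len(set(filled)),
            "invalid": len(invalid),
        }
    # Exact duplicates: identical rows beyond the first occurrence.
    counts = Counter(tuple(sorted(row.items())) for row in rows)
    report["duplicate_rows"] = sum(n - 1 for n in counts.values())
    return report


# Hypothetical permit records from a legacy system.
rows = [
    {"parcel_id": "A-100", "zip": "33101", "permit_date": "2021-03-04"},
    {"parcel_id": "A-101", "zip": "3310", "permit_date": "03/04/2021"},
    {"parcel_id": "A-102", "zip": "", "permit_date": "2021-05-10"},
    {"parcel_id": "A-100", "zip": "33101", "permit_date": "2021-03-04"},
]

report = profile(rows)
print(report)
```

Because each rule is a plain predicate keyed by column name, adding a check for another column is a single new dictionary entry, which is the appeal of a rules-based approach.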

Contact our team to learn more about getting started.
